Luma AI Launches Text-to-3D Model Generator

Were you amazed by the interview with Kaedim and how they turn images into 3D models? Well, this is the next level. Luma AI has adapted its Neural Radiance Field (NeRF) technology, an AI-based 3D scanning method, into a completely new way of creating 3D models: typing a single sentence.

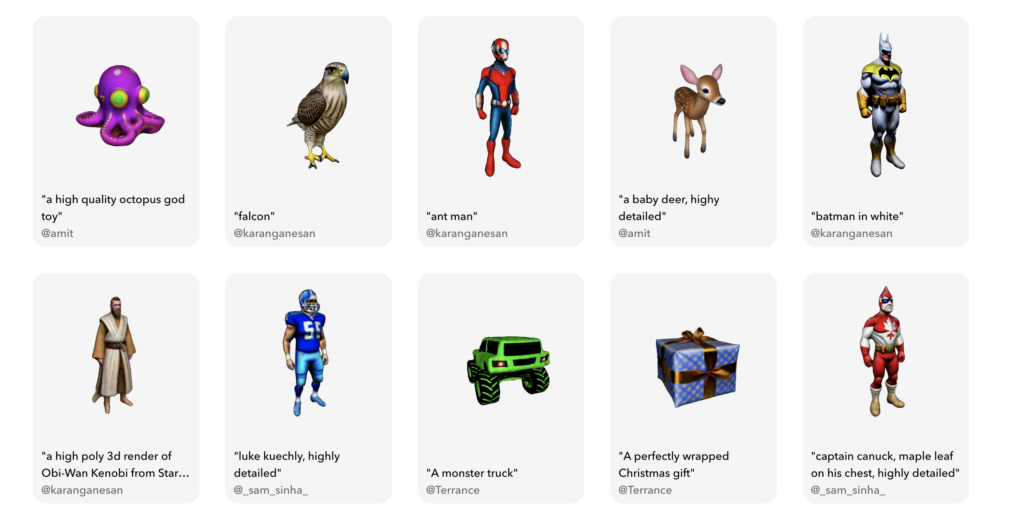

With Luma AI’s Imagine 3D, you type in a sentence and the system generates a solid 3D model with a full-color texture. The technology resembles recently popular text-to-image systems such as DALL-E 2, Midjourney, and Stable Diffusion, but instead of producing 2D images, Imagine 3D produces 3D models.

So far, the 3D models produced by Imagine 3D are crude, but Luma AI has shown that a system can generate reasonably usable 3D models from nothing more than a text prompt. And this is just the beginning. As the technology improves, and as other companies adopt and refine the concept, we’ll likely see more and more high-quality 3D models produced on demand, with no need for 3D modeling software. All you’ll need is a suitable prompt and your imagination, and, most likely, a subscription to the provider.

The implications of this technology are vast. Products with similar capabilities could become game changers for industries such as architecture, product design, and video game development by making 3D models easy to create, with shorter lead times and less manual work. Even if current applications leave a lot to be desired, text- or image-to-3D software has the potential to permanently change the way we create and use 3D assets. Read more about generating 3D models with AI in this interview with Kaedim or this piece about OpenAI’s Shap-E.
